OpenAI has been one of the most talked-about companies since last week, for all the wrong reasons. The company fired its co-founder and CEO Sam Altman, then rehired him in the same position after heavy pressure from its key investor, Microsoft. Just as that saga was settling down, ChatGPT users have started complaining that OpenAI is deliberately slowing down the service.

Several ChatGPT users have taken to Reddit and Twitter to report that the latest GPT-4 Turbo model has become lazy and no longer responds to prompts the way it used to. Some speculate that OpenAI is nerfing ChatGPT to prevent misuse.

ChatGPT Is Becoming Lazy, Say Users

The primary complaint is that ChatGPT no longer answers questions in as much depth as it did a few months ago. A user named Eric Hartford asked ChatGPT to develop a streaming client using Kotlin and the OpenAI APIs. Instead of writing the code, ChatGPT gave him a list of steps for solving the problem himself.

Wow GPT4 has been seriously nerfed. I just tried interactively developing a bit of code, something that worked perfect 2 weeks ago, and it resisted and acted lazy. Ay, I'm going to have to turn to open models for coding… (maybe a good thing tho) https://t.co/KFSz2md4Dr

— Eric Hartford (@erhartford) November 28, 2023

Eric took the matter to Twitter, saying that this was not the case a couple of weeks earlier, when ChatGPT would start writing code as soon as it was asked. Furthermore, when Eric specifically asked ChatGPT to write the code, the bot apparently returned incomplete code.

OpenAI has safety-ed GPT-4 sufficiently that its become lazy and incompetent.

Convert this file? Too long. Write a table? Here's the first three lines. Read this link? Sorry can't. Read this py file? Oops not allowed.

So frustrating.

— rohit (@krishnanrohit) November 28, 2023

Another user, Rohit, posted on Twitter that ChatGPT has become lazy. He says the service refuses to complete lengthy tasks and takes shortcuts instead. When Rohit asked ChatGPT to write a table from some data, the bot wrote only the first three lines and asked him to complete the rest. According to his post, it also refuses to read certain links and files that it previously handled without trouble.

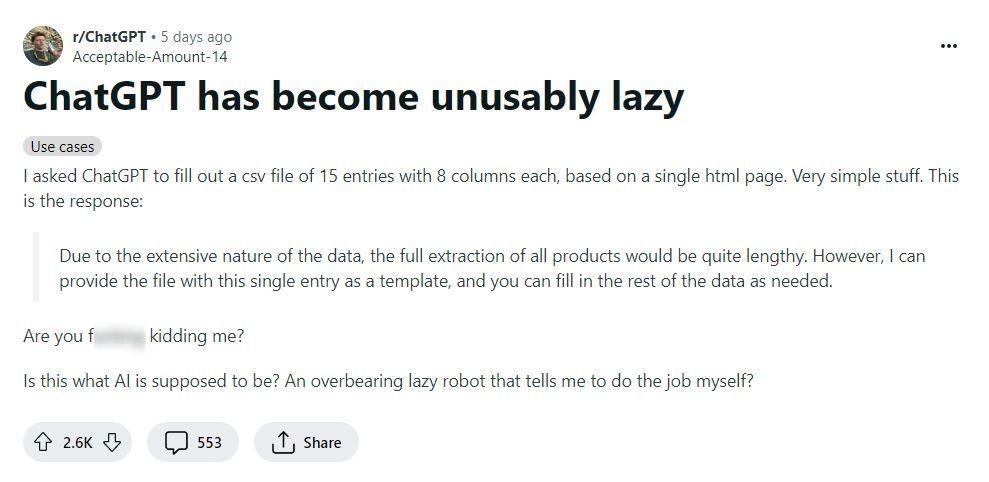

Similar complaints have emerged on Reddit, where several users report that ChatGPT refuses to complete even simple tasks. Reddit user Acceptable-Amount-14 asked ChatGPT to fill a CSV file with 15 entries of 8 columns each. Although the task was relatively easy, ChatGPT replied that there was too much data to process and that the result would be lengthy.

As complaints mounted online, Owen Campbell-Moore, OpenAI’s API Product Manager, replied to a user on Twitter that such cases are a bug and that the company is working on a fix. He also added a sarcastic aside about how crazy it is for a bot to ask you to write your own code.

It is possible that Owen was being friendly and trying to relate to the user’s frustration. On the flip side, he may have been hinting that users are pushing ChatGPT too hard and that the bot’s short responses are normal.

This is a bug, we’re working on it!

(Driving me crazy too, like I’m supposed to write my own code??? C’mon now.)

— Owen Campbell-Moore ✪ (@owencm) November 29, 2023

Hence, it is still not clear whether OpenAI is purposefully nerfing ChatGPT or whether we are expecting too much from the AI-powered bot.

Are Humans Getting Lazy Due to ChatGPT?

While many users are complaining about ChatGPT slowing down, some say that we are becoming too dependent on the service. It is possible that ChatGPT has made us so comfortable that some of us no longer bother to work out solutions on our own.

However, another possible explanation for the slowdown is the rapid growth of ChatGPT’s user base. ChatGPT is an AI bot that requires huge GPU computing resources to function. Given the increasing number of users, it is possible that the company is running short of computing capacity to provide the best experience to everyone, resulting in less in-depth responses.

We still don’t know the exact truth behind this. Despite the rising number of complaints, OpenAI has not issued an official statement on the situation beyond Campbell-Moore’s reply on Twitter.